Google’s premier cloud computing and AI conference, Google Cloud Next 2023, took place the last week of August at Moscone Center in San Francisco. I attended the event and spent several days in keynotes, briefings, and sessions, as well as exploring the expo floor. Of course, I shared some of my real-time observations on X (formerly Twitter), which you can check out here. In this post, I’ll share a few of my key takeaways from the event.

This was the first in-person Google Cloud Next event in three years. While it felt a lot smaller and more compact than the last one I attended, it was still large for a post-pandemic conference, with approximately 15,000 attendees.

Generative AI in Focus

No surprise here: generative AI was a key theme throughout the event, though there was plenty of content for folks more interested in traditional cloud computing topics.

In addition to enabling new features and capabilities across the company’s core AI stack (AI-oriented infrastructure and accelerators, AI/ML/DS platforms, and AI-powered applications), Google is weaving generative AI into non-AI products through Duet AI, which adds AI-based assistant technologies to a wide range of Google Cloud products.

A good indication of how broadly and quickly Google has built generative AI into its product base is the number of AI-related announcements made during the event. Here’s a summary of the most interesting AI-focused ones, out of the full list of 161 noted in Alison Wagonfeld’s wrap-up post:

- Duet AI in Google Cloud is now in preview with new capabilities, with general availability coming later this year. A dozen more announcements covered Duet AI features for specific Google Cloud tools; the blog post has a summary.

- Vertex AI Search and Conversation, formerly Enterprise Search on Generative AI App Builder and Conversational AI on Generative AI App Builder, are both now generally available.

- Google Cloud added new models to Vertex AI Model Garden including Meta’s Llama 2 and Code Llama and Technology Innovation Institute’s Falcon LLM, and pre-announced Anthropic’s Claude 2.

- The PaLM 2 foundation model now supports 38 languages and 32,000-token context windows, making it possible to process long documents in prompts.

- The Codey chat and code generation model offers up to a 25% quality improvement in major supported languages for code generation and code chat.

- The Imagen image generation model features improved visual appeal, image editing, captioning, a new tuning feature to align images to guidelines with 10 or fewer samples, and visual question answering, as well as digital watermarking powered by Google DeepMind SynthID.

- Adapter tuning in Vertex AI is generally available for PaLM 2 for text. Reinforcement Learning from Human Feedback (RLHF) is now in public preview.

- New Vertex AI Extensions let models take actions, retrieve specific information in real time, and act on behalf of users across Google and third-party applications like DataStax, MongoDB, and Redis. New Vertex AI data connectors help ingest data from enterprise and third-party applications like Salesforce, Confluence, and Jira.

- Vertex AI now supports Ray, an open-source unified compute framework to scale AI and Python workloads.

- Google Cloud announced Colab Enterprise, a managed service in public preview that combines the ease of use of Google’s Colab notebooks with enterprise-level security and compliance capabilities.

- Next month, Google will make Med-PaLM 2, a medically tuned version of PaLM 2, available as a preview to more customers in the healthcare and life sciences industry.

- New features enhance MLOps for generative AI. Automatic Metrics in Vertex AI evaluates models based on a defined task and a “ground truth” dataset. Automatic Side by Side in Vertex AI uses a large model to evaluate the outputs of multiple models under test, helping to augment human evaluation at scale. And a new generation of Vertex AI Feature Store, now built on BigQuery, helps avoid data duplication and preserve data access policies.

- Vertex AI foundation models, including PaLM 2, can now be accessed directly from BigQuery. New model inference in BigQuery lets users run inference on models in formats like TensorFlow, ONNX, and XGBoost, and new real-time inference capabilities can identify patterns and automatically generate alerts. BigQuery now supports vector and semantic search for model tuning, and vector embeddings in BigQuery can be synchronized automatically with Vertex AI Feature Store for model grounding.

- A3 VMs, based on NVIDIA H100 GPUs and delivered as a GPU supercomputer, will be generally available next month. The new Cloud TPU v5e, in preview, offers up to 2x higher training performance per dollar and up to 2.5x higher inference performance per dollar for LLMs and generative AI models compared to Cloud TPU v4. New Multislice technology, also in preview, lets you scale AI models beyond the boundaries of physical TPU pods, across tens of thousands of Cloud TPU v5e or TPU v4 chips. GKE now supports Cloud TPU v5e and Cloud TPU v4, A3 VMs with NVIDIA H100 GPUs, and Cloud Storage FUSE (GA), and support for AI inference on Cloud TPUs is in preview.

Key Takeaways

My takeaways from Google Cloud Next are very much in the same vein as those from my attendance at Google’s Cloud Executive Forum held earlier in the summer.

I continue to be impressed with Google Cloud’s velocity and focus in attacking the opportunity presented by generative AI. The company clearly sees gen AI as a way to leap ahead of competitors AWS and Microsoft and is taking an “all in” approach.

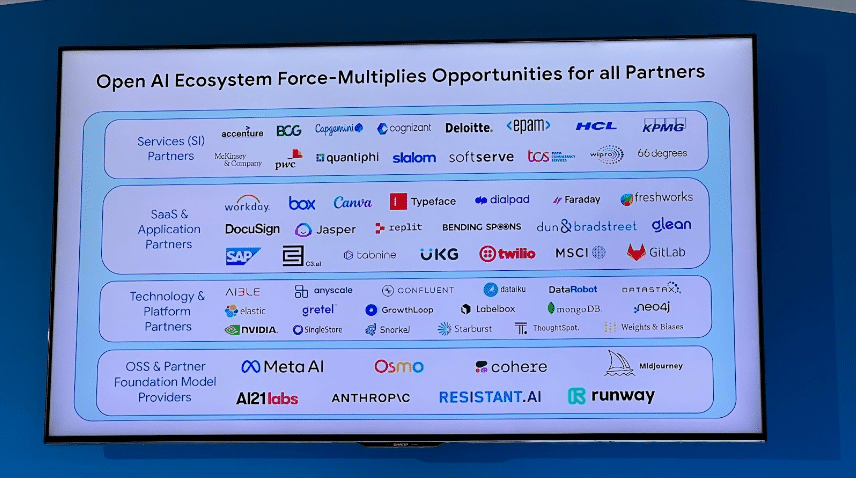

The company has also been very quick to rally customers around its new gen AI product offerings. In addition to the product announcements noted above, Google Cloud announced and highlighted new and expanded generative-AI-focused collaborations with a wide variety of customers and partners, including AdoreMe, Anthropic, Bayer Pharmaceuticals, Canoo, Deutsche Bank, Dun & Bradstreet, Fox Sports, GE Appliances, General Motors, Ginkgo Bioworks, Hackensack Meridian Health, HCA Healthcare, Huma, Infinitus, Meditech, MSCI, NVIDIA, Runway, Six Flags, eleven generative AI startups, DocuSign, SAP, and more.

Interesting overview of @FOXSports use of Gen AI. Have 27 PB of video, ingest 10k hrs per month. Have custom models for things like celebrity detection, foul ball prediction, and more. Use the tech to allow analysts to more easily search archives. #GoogleCloudNext pic.twitter.com/ea3tQCVXU0

— Sam Charrington (@samcharrington) August 29, 2023

AI-Driven Transformation panel at #googlecloudnext Analyst Summit featuring data leaders from @Snap and @Wayfair. pic.twitter.com/aANlHv6nHT

— Sam Charrington (@samcharrington) August 29, 2023

Next up, fireside with Deutsche Bank Chief Innovation Officer Gil Perez and @googlecloud VP @juniebugca. Talking AI driven transformation in a highly regulated industry. pic.twitter.com/OC5RMUWGiQ

— Sam Charrington (@samcharrington) August 29, 2023

A. Scalability. Want to see use cases that impact across all of the bank.

— Sam Charrington (@samcharrington) August 29, 2023

B. Responsible velocity. Want to move fast in responsible/AI manner. Target doubling velocity each year.

C. Self-sufficiency. Want to drive towards ability to use and operate gen AI tech on their own.

“For the first time, the business is really engaged in transformation… We will figure out hallucinations, omissions, etc., … but the level of engagement is game changing.”

- Gil Perez, Chief Innovation Officer, Deutsche Bank

Additionally, Google Cloud continues to grow its generative AI ecosystem, announcing availability of Anthropic’s Claude 2 and Meta’s Llama 2 and Code Llama models in the Vertex AI Model Garden.

TK highlighting breadth of model catalog in Vertex AI, via new and existing model partners. Announcing support for @AnthropicAI Claude2 and @MetaAI Llama2 and CodeLlama models. #googlecloudnext pic.twitter.com/E1gkpT59UA

— Sam Charrington (@samcharrington) August 29, 2023

Opportunities

Numerous opportunities remain for Google Cloud, most notably in managing complexity, both in its messaging and in the products themselves.

From a messaging perspective, with so many new ideas to talk about, it is not always clear what is actually a new feature or product capability versus simply a trendy topic the company wants to be able to talk about. For example, the company mentioned new grounding features for LLMs numerous times, but I’ve been unable to find any concrete detail about which new features enable grounding on the platform. The wrap-up blog post noted previously links to an older post on the broader topic of using embeddings to ground LLM output with first-party and third-party products. It’s a nice resource, but it isn’t really related to any new product features.

Since the conference, I’ve spent some time exploring various Vertex AI features and APIs, and I still find the console and example notebooks somewhat confusing and the documentation somewhat inconsistent. To be fair, the same complaints could be leveled at Google Cloud’s major competitors, but as the underdog in the cloud computing race, Google has the most to lose if product complexity makes switching costs too high.

Nonetheless, I’m looking forward to seeing how things evolve for Google Cloud over the next few months. In fact, we won’t need to wait a full year for updates, since Google Cloud Next ’24 will take place in the spring, April 9–11, in Las Vegas.