I recently had the opportunity to attend the Google Cloud Executive Forum, held at Google’s impressive new Bay View campus, in Mountain View, California. The Forum was an invitation-only event that brought together CIOs and CTOs of leading companies to discuss Generative AI and showcase Google Cloud’s latest advancements in the domain. I shared my real-time reactions to the event content via Twitter, some of which you can find here. (Some weren’t hash-tagged, but you can find most by navigating the threads.) In this post I’ll add a few key takeaways and observations from the day I spent at the event.

Key Takeaways

Continued product velocity

Google Cloud has executed impressively against the Generative AI opportunity, with a wide variety of product offerings announced at the Google Data Cloud & AI Summit in March and at Google I/O in May. These include new tools like Generative AI Studio and Generative AI App Builder; models like PaLM for Text and Chat, Chirp, Imagen, and Codey; Embeddings APIs for Text and Images; Duet AI for Google Workspace and Google Cloud; new hardware offerings; and more.

The company took the opportunity of the Forum to announce the general availability of Generative AI Studio and Model Garden, both part of the Vertex AI platform, as well as the pre-order availability of Duet AI for Google Workspace.

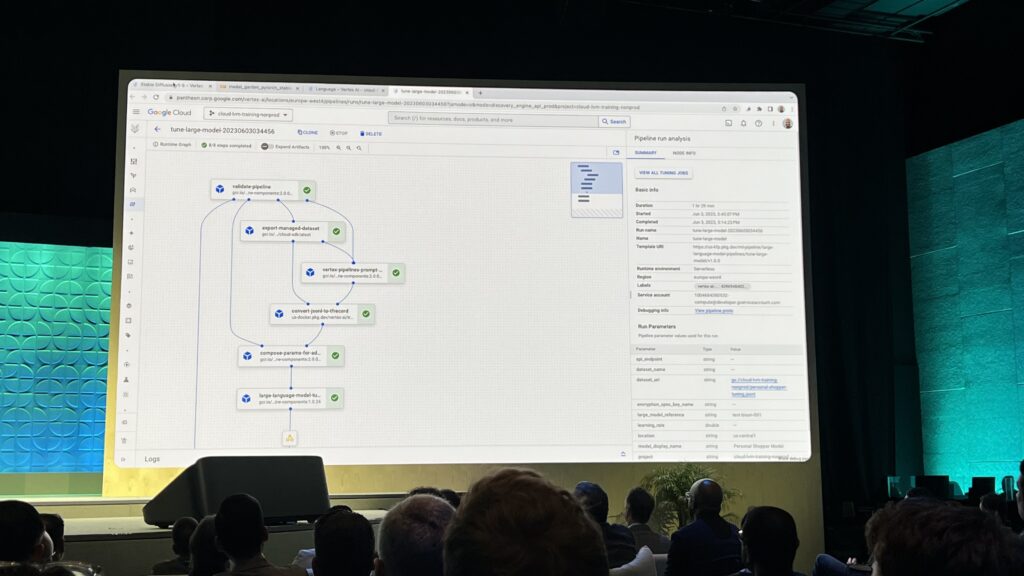

Nenshad Bardoliwalla, product director for Vertex AI, delivered an impressive demo showing one-click fine tuning and deployment of foundation models on the platform.

Considering that the post-ChatGPT Generative AI wave is only six months old, Google’s ability to get Gen AI products out the door and into customer hands quickly has been noteworthy.

Customer and partner traction

Speaking of customers, this was another area where Google Cloud’s performance has been impressive. The company announced several new Generative AI customer case studies at the Forum, including Mayo Clinic, GA Telesis, Priceline, and PhotoRoom. Executives from Wendy’s, Wayfair, Priceline and Mayo participated in an engaging customer panel that was part of the opening keynote session. Several other customers were mentioned during various keynotes and sessions, as well as in private meetings I had with Google Cloud execs.

See my Twitter thread for highlights and perspectives from the customer panel, which shared interesting insights about how those orgs are thinking about generative AI.

Strong positioning

While Models Aren’t Everything™, in a generative AI competitive landscape in which Microsoft’s strategy is strongly oriented around a single opaque model (ChatGPT via its OpenAI investment) and AWS’ strategy is strongly oriented around models from partners and open source communities, Google Cloud is promoting itself as a one-stop shop with strong first-party models from Google AI, support for open source models via its Model Garden, and partnerships with external research labs like AI21, Anthropic and Cohere.

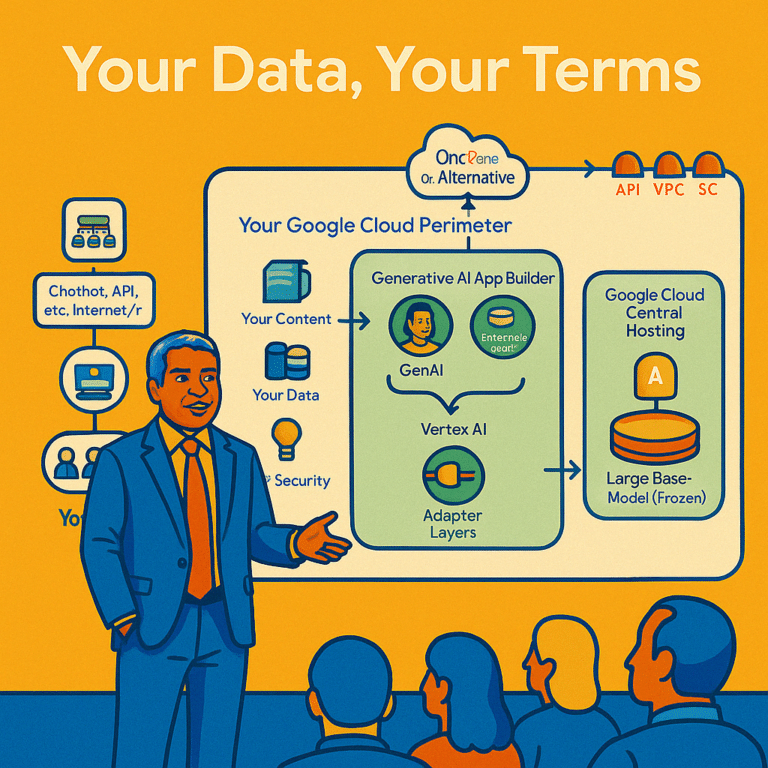

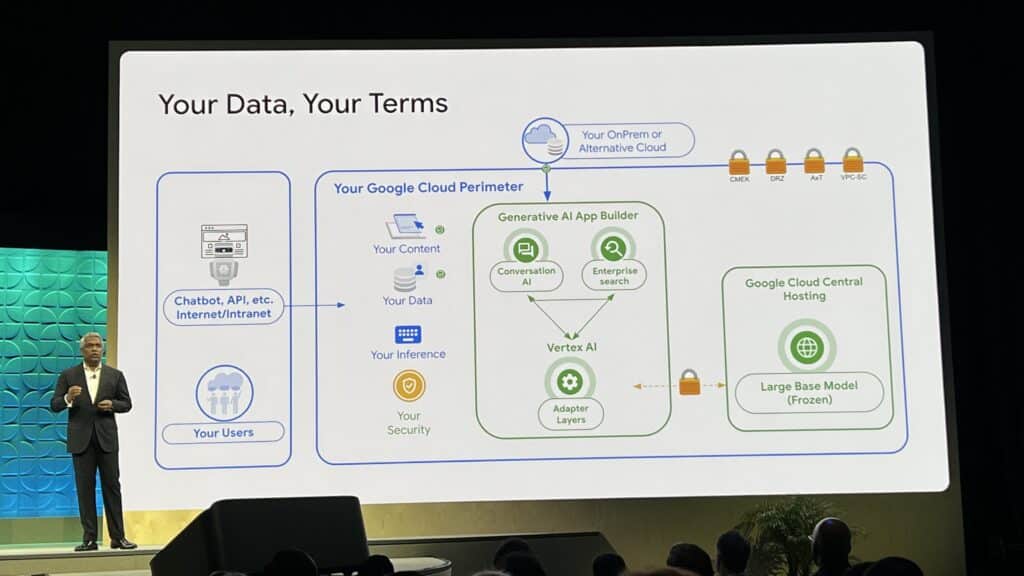

The company also demonstrates a strong understanding of enterprise customer requirements around generative AI, with particular emphasis on data and model privacy, security and governance.

The company’s strategy will continue to evolve and unfold in the upcoming months and much more will be discussed at Google Cloud Next in August, but I liked what I heard from product leaders at the event about the direction they’re heading. One hint: they have some strong ideas about how to address hallucination, which is one of the biggest drawbacks to enterprise use of large language models (LLMs). I don’t believe that hallucinations by LLMs can ever be completely eliminated, but in the context of a complete system with access to a comprehensive map of the world’s knowledge, there’s a good chance that the issue can be sufficiently mitigated to make LLMs useful in a wide variety of customer-facing enterprise use cases.

Complex communication environment and need to educate

In his opening keynote to an audience of executives, Google Cloud CEO Thomas Kurian (TK) introduced concepts like reinforcement learning from human feedback, low-rank adaptation (LoRA), synthetic data generation, and more. While impressive, and to some degree a matter of TK’s personal style, it also says something about where we are in this market that we’re talking to CIOs about LoRA and not ROI. This will certainly evolve as customers get more sophisticated and use cases stabilize, but it’s indicative of the complex communication challenges Google faces in evangelizing highly technical products in a brand new space to a rapidly growing audience.

This also highlights the need for strong customer and market education efforts to help bring all the new entrants up to speed. To this end, Google Cloud announced new consulting offerings, learning journeys, and reference architectures at the Forum. These add to the training courses announced at I/O.

I also got to chat 1:1 with one of their “black belt ambassadors,” part of a team they’ve put in place to help support the broader engineering, sales and other internal teams at the company.

Overall, I think the company’s success will depend in large part on how effectively it helps bring these external and internal communities up to speed on Generative AI.

Broad range of attitudes

A broad range of attitudes about Generative AI was present at the event. On the one hand there was what I took as a very healthy “moderated enthusiasm” on the part of some. Wayfair CTO Fiona Tan exemplified this perspective both in her comments on the customer panel and in our lunch discussion. She talked about the need to manage “digital legacy” and the importance of platform investments, and was clear in noting that many of the company’s early investments in generative AI were experiments (e.g. a Stable Diffusion-based room designer they’re working on).

On the other hand, there were comments clearly indicative of “inflated expectations,” like those of another panelist, who speculated that code generation would allow enterprises to cut the time it takes to build applications from six weeks to two days, or those of a fellow analyst, who proclaimed that generative AI was the solution to healthcare in America. The quicker we get everyone past this stage the better.

For its part, Google Cloud did a good job navigating this communication challenge by staying grounded on what real companies were doing with its products.

I’m grateful to the Google Cloud Analyst Relations team for bringing me out to attend the event. Disclosure: Google is a client.