Earlier this summer I posted about my daughter Malaika’s internship with TWIML, exploring the use of machine learning services to transcribe the podcast. She’s off enjoying her freshman year at college now, but she posted an update on her project a few weeks ago that I thought I’d share here. If you’re curious about the state of cognitive services like the Google Speech-to-Text API, you’ll find some of her observations interesting.

I’ve been fortunate that the project has since been picked up by Insight AI Fellow Andrew Vold. You may recall from my interview with Insight’s Ross Fadely that their programs help graduating PhDs bridge the gap between academia and careers in AI, data science, data engineering, DevOps engineering, health data, and data product management (whew, that list has gotten longer since I chatted with Ross).

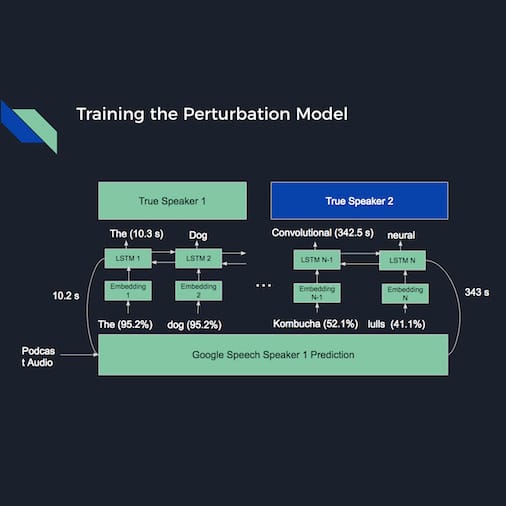

As part of their programs, Insight pairs fellows with practical projects from industry, and Andrew, who just finished his Ph.D. in experimental particle physics at the University of Minnesota, graciously selected ours to work on. He’s calling the project StenoPod and will be using word embeddings and an LSTM to correct transcription and speaker identification errors in the results provided by the transcription API.

Thanks to Emmanuel at Insight for encouraging our proposal, Insight AI for accepting it, and Andrew for choosing it. Andrew will be looking for his next opportunity when the program ends in a few weeks, so if you’re hiring let me know and I’ll connect you with the team at Insight.

And if you’re interested in continuing work on this project when Andrew moves on, let me know. It’s a fun and interesting project with lots of learning opportunities.
