The issue of bias in AI was the subject of much discussion in the AI community last week. The publication of PULSE, a machine learning model by Duke University researchers, sparked a great deal of it. PULSE proposes a new approach to the image super-resolution problem, i.e. generating a faithful higher-resolution version of a low-resolution image.

In short, PULSE works by using a novel technique to efficiently search space of high-resolution artificial images generated using a GAN and identify ones that are downscale to the low-resolution image. This is in contrast to previous approaches to solving this problem, which work by incrementally upscaling the low-resolution images and which are typically trained in a supervised manner with low- and high-resolution image pairs. The images identified by PULSE are higher resolution and more realistic than those produced by previous approaches, and without the latter’s characteristic blurring of detailed areas.

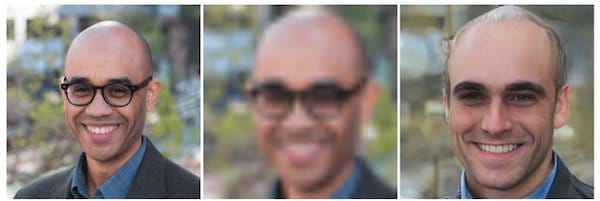

However, what the community quickly identified was that the PULSE method didn’t work so well on non-white input images. An example using a low res image of President Obama was one of the first to make the rounds, and Robert Ness used a photo of me to create this example:

I’m going to skip a recounting of the unfortunate Twitter firestorm that ensued following the model’s release. For that background, Khari Johnson provides a thoughtful recap over at VentureBeat, as does Andrey Kurenkov over at The Gradient.

Rather, I’m going to riff a bit on the idea of where bias comes from in AI systems. Specifically, in today’s episode of the podcast featuring my discussion with AI Ethics researcher Deb Raji I note, “I don’t fully get why it’s so important to some people to distinguish between algorithms being biased and data sets being biased.”

Bias in AI systems is a complex topic, and the idea that more diverse data sets are the only answer is an oversimplification. Even in the case of image super-resolution, one can imagine an approach based on the same underlying dataset that exhibits behavior that is less biased, such as by adding additional constraints to a loss or search function or otherwise weighing the types of errors we see here more heavily. See AI artist Mario Klingemann’s Twitter thread for his experiments in this direction.

Not electing to consider robustness to dataset biases is a decision that the algorithm designer makes. All too often, the “decision” to trade accuracy with regards to a minority subgroup for better overall accuracy is an implicit one, made without sufficient consideration. But what if, as a community, our assessment of an AI system’s performance was expanded to consider notions of bias as a matter of course?

Some in the research community choose to abdicate this responsibility, by taking the position that there is no inherent bias in AI algorithms and that it is the responsibility of the engineers who use these algorithms to collect better data. However, as a community, each of us, and especially those with influence, has a responsibility to ensure that technology is created mindfully, with an awareness of its impact.

On this note, it’s important to ask the more fundamental question of whether a less biased version of a system like PULSE should even exist, and who might be harmed by its existence. See Meredith Whittaker’s tweet and my conversation with Abeba Birhane on Algorithmic Injustice and Relational Ethics for more on this.

A full exploration of the many issues raised by the PULSE model is far beyond the scope of this article, but there are many great resources out there that might be helpful in better understanding these issues and confronting them in our work.

First off there are the videos from the tutorial on Fairness Accountability Transparency and Ethics in Computer Vision presented by Timnit Gebru and Emily Denton. CVPR organizers regard this tutorial as “required viewing for us all.”

Next, Rachel Thomas has composed a great list of AI ethics resources on the fast.ai blog. Check out her list and let us know what you find most helpful.

Finally, there is our very own Ethics, Bias, and AI playlist of TWIML AI Podcast episodes. We’ll be adding my conversation with Deb to it, and it will continue to evolve as we explore these issues via the podcast.

I’d love to hear your thoughts on this.

(Thanks to Deb Raji for providing feedback and additional resources for this article!)