It’s mighty fine, your newsletter number nine!

Putting the Adversarial in Adversarial

Last week I noted that the term “adversarial” is connected to two hot areas of interest and research in machine learning and AI. I provided an introduction to the first of these, adversarial training, which was the topic of our first TWIML Online Meetup.

The second area is a bit more, well, adversarial. I’m speaking here of adversarial attacks on machine learning models.

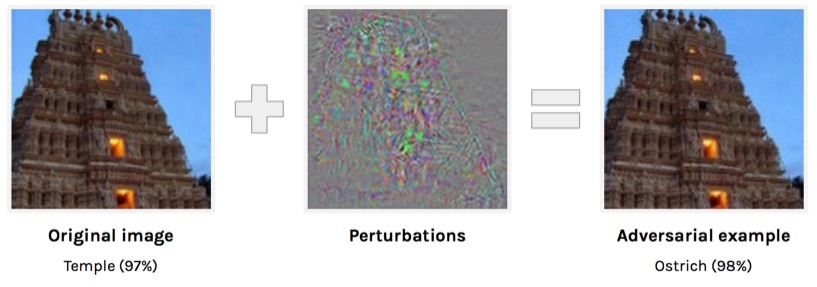

Let’s say we’ve trained a deep neural network to label photos based on what’s in them, e.g. a dog, a cat, a school bus, or a building. Once our network is trained, we put it out in the field and throw images at it to classify. An adversarial attack on this model is the process of designing images (adversarial examples) that will cause the model to make a predictable mistake in the label it assigns.

In the case of images, this is usually done by injecting small changes into the input image that don’t change the way it’s perceived by humans, but do change the way it’s perceived by models.
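
To make the "small changes" idea concrete, here is a minimal sketch in plain Python. It is not the method behind any of the examples in this newsletter. It assumes a toy logistic-regression "model" with random weights standing in for a trained network, and it uses a gradient-sign style perturbation (in the spirit of the fast gradient sign method) as one illustrative way to build an adversarial example. All names (`predict_proba`, `perturb`, `epsilon`) and all values are hypothetical.

```python
import math
import random


def predict_proba(weights, bias, pixels):
    """Probability that the image is class 1 under a toy logistic model."""
    logit = sum(w * p for w, p in zip(weights, pixels)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights, bias, pixels, label, epsilon):
    """Shift every pixel by at most epsilon in the direction that raises the loss."""
    p = predict_proba(weights, bias, pixels)
    # For cross-entropy loss on a logistic model, d(loss)/d(pixel_i) = (p - label) * w_i.
    grads = [(p - label) * w for w in weights]
    # Step by epsilon along the sign of the gradient, keeping pixels in [0, 1].
    return [min(1.0, max(0.0, px + epsilon * sign(g))) for px, g in zip(pixels, grads)]


# Assumption: random weights stand in for a trained model, and a random
# 28x28 "image" (784 values in [0.2, 0.8]) stands in for a real photo.
random.seed(0)
n_pixels = 784
weights = [random.gauss(0.0, 1.0) for _ in range(n_pixels)]
pixels = [random.uniform(0.2, 0.8) for _ in range(n_pixels)]
# Choose the bias so the clean image is classified as class 1 with a logit of about 2.
bias = 2.0 - sum(w * p for w, p in zip(weights, pixels))

adversarial = perturb(weights, bias, pixels, label=1.0, epsilon=0.02)

clean_prob = predict_proba(weights, bias, pixels)
adv_prob = predict_proba(weights, bias, adversarial)
max_change = max(abs(a - p) for a, p in zip(adversarial, pixels))

print("clean P(class 1):      ", round(clean_prob, 4))
print("adversarial P(class 1):", round(adv_prob, 6))
print("largest pixel change:  ", round(max_change, 4))
```

In this toy setup no pixel moves by more than 0.02 on a 0-to-1 scale, yet the predicted class flips, because hundreds of tiny nudges all push the model’s score in the same direction. Real attacks on deep networks are more involved, but the intuition carries over.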

There are a few commonly cited examples of this effect. This is one of my favorites:

Holy perturbations, Batman! With the right kind of attack, we can make the model classify this temple as an ostrich!

This one is also cool. The folks at OpenAI created an attack that will cause a picture of a kitten to register as a desktop computer or monitor, even when the image is rotated or zoomed.

Adversarial attacks on machine learning and deep learning models will be an important area to watch. With rumors pointing to an iPhone 8 that ditches the fingerprint reader for an image-based unlock mechanism based on facial scans, the implications of these kinds of attacks on security become obvious. There are also many other areas in which automated decisions are made based on imagery, including web content moderation (Not Hotdog) and self-driving cars.

I’d like to explore this area more fully on the podcast and/or at a meetup at some point, so stay tuned!